This document is relevant for: Inf1

The Neuron Scheduler Extension is a Kubernetes scheduler plugin that provides intelligent, topology-aware scheduling for Neuron workloads. While the device plugin handles basic resource allocation, the scheduler extension optimizes Pod placement by considering Neuron core topology, NeuronCore-to-NeuronCore connectivity, and workload requirements. It ensures efficient utilization of Neuron devices by placing Pods on nodes where the requested Neuron cores are optimally configured. This component is optional and primarily beneficial for workloads that require specific subsets of Neuron devices or cores rather than consuming all available resources on a node.

Pods that request more than one Neuron core or device resource require the scheduler extension. When scheduling containers, it finds sets of directly connected devices with minimal communication latency, which keeps multi-device workloads performant.

For a graphical depiction of how the Neuron Scheduler Extension works, see Neuron Scheduler Extension Flow Diagram.

Device Allocation by Instance Type

The Neuron Scheduler Extension applies topology-aware scheduling rules based on instance type to ensure consistently high performance regardless of which cores and devices are assigned to containers.

Inf1 and Inf2 Instances (Ring Topology)

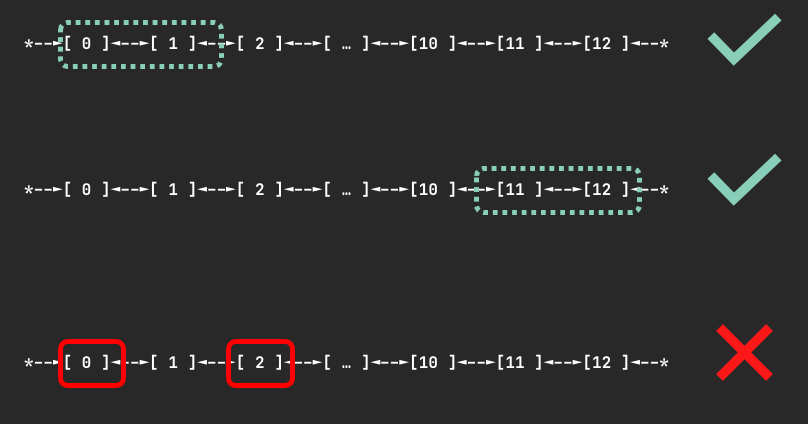

Devices are connected through a ring topology with no restrictions on the number of devices requested (as long as it does not exceed the total number of devices on a node). When N devices are requested, the scheduler finds a node where N contiguous devices are available to minimize communication latency. It never allocates non-contiguous devices to the same container.

For example, when a container requests 3 Neuron devices, the scheduler might assign devices 0, 1, 2 if available, but never devices 0, 2, 4 because those devices are not directly connected.
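The contiguity rule can be illustrated with a short Python sketch. This is not the scheduler's actual implementation: the function name `find_contiguous_devices` and the 6-device node are hypothetical, and the sketch assumes that a run of devices may wrap around from the last device back to device 0, which the text above does not confirm.

```python
from typing import List, Optional


def find_contiguous_devices(in_use: List[bool], n: int) -> Optional[List[int]]:
    """Return n free device IDs that are adjacent on the ring, or None.

    in_use[i] is True when device i is already allocated. Device i is
    assumed to be linked to device (i + 1) % len(in_use), so a run may
    wrap from the last device back to device 0 (an assumption).
    """
    total = len(in_use)
    if n < 1 or n > total:
        return None
    for start in range(total):
        # Candidate run of n neighbouring devices starting at `start`.
        ids = [(start + offset) % total for offset in range(n)]
        if not any(in_use[i] for i in ids):
            return ids
    return None


# Hypothetical 6-device node with devices 1 and 2 already allocated.
usage = [False, True, True, False, False, False]
print(find_contiguous_devices(usage, 3))  # [3, 4, 5]
print(find_contiguous_devices(usage, 4))  # [3, 4, 5, 0]
print(find_contiguous_devices(usage, 5))  # None
```

A request that cannot be satisfied by any contiguous run returns `None`, which corresponds to a node that would not be selected for the Pod.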

The figure below shows examples of device sets on an Inf2.48xlarge node that could be assigned to a container requesting 2 devices:

Trn1.32xlarge and Trn1n.32xlarge Instances (2D Torus Topology)

Devices are connected via a 2D torus topology. The scheduler enforces that containers request 1, 4, 8, or all 16 devices. If your container requires a different number of devices (such as 2 or 5), we recommend using an Inf2 instance instead to benefit from more flexible topology support.

If you request an invalid number of devices (such as 7), your Pod will not be scheduled and you will receive a warning:

Instance type trn1.32xlarge does not support requests for device: 7. Please request a different number of devices.
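As a rough illustration of this rule, the following Python sketch validates a device count before a Pod specification is submitted. The function name `check_trn1_device_request` is hypothetical and the check is not part of the scheduler extension; only the set of valid counts and the warning text come from this page, and reusing the same message format for trn1n.32xlarge is an assumption.

```python
from typing import Optional

# Valid device counts for trn1.32xlarge and trn1n.32xlarge, as listed above.
VALID_TRN1_DEVICE_COUNTS = {1, 4, 8, 16}


def check_trn1_device_request(count: int,
                              instance_type: str = "trn1.32xlarge") -> Optional[str]:
    """Return None for a valid request, otherwise a warning string.

    The warning mirrors the message quoted above; whether the scheduler
    formats it identically for every instance type is an assumption.
    """
    if count in VALID_TRN1_DEVICE_COUNTS:
        return None
    return (
        f"Instance type {instance_type} does not support requests for "
        f"device: {count}. Please request a different number of devices."
    )


print(check_trn1_device_request(8))  # None
print(check_trn1_device_request(7))
```

Running a check like this in a deployment pipeline can catch an invalid request before the Pod is left unscheduled.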

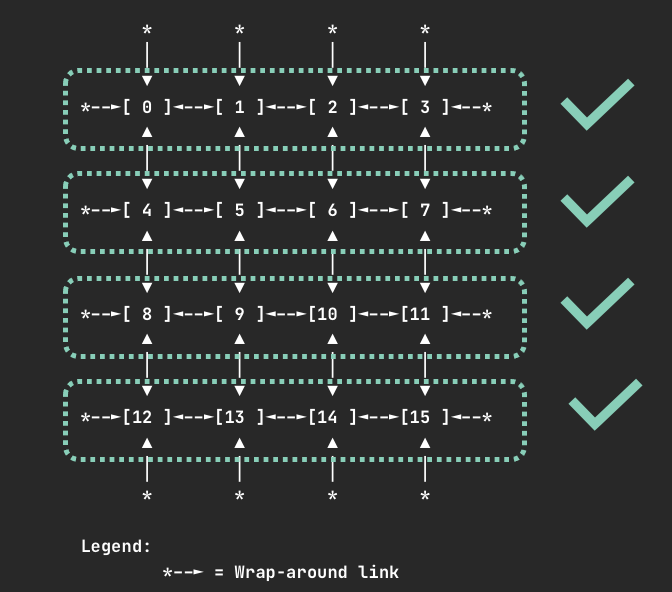

When requesting 4 devices, your container will be allocated one of the following device sets if available:

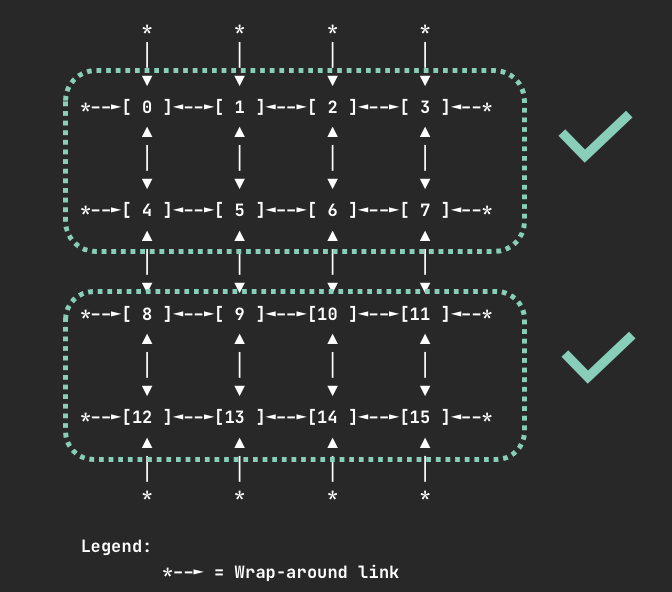

When requesting 8 devices, your container will be allocated one of the following device sets if available:

Note

For all instance types, requesting one or all Neuron cores or devices is always valid.

Deploy Neuron Scheduler Extension

The multiple scheduler approach deploys a separate scheduler alongside the default Kubernetes scheduler. It is useful in environments where you cannot modify the default scheduler configuration, such as Amazon EKS.

In this setup, a new scheduler (my-scheduler) is deployed with the Neuron Scheduler Extension integrated. Pods that need to run Neuron workloads specify this custom scheduler in their configuration.

Note

Amazon EKS does not natively support modifying the default scheduler, so this multiple scheduler approach is required for EKS environments.

Prerequisites

Ensure that the Neuron Device Plugin is running.

Step 1: Install Neuron Scheduler Extension

Install the Neuron Scheduler Extension as a custom scheduler:

helm upgrade --install neuron-helm-chart oci://public.ecr.aws/neuron/neuron-helm-chart \
    --set "scheduler.enabled=true" \
    --set "npd.enabled=false"

Step 2: Verify Installation

Check that the my-scheduler pod logs contain no errors and that the k8s-neuron-scheduler pod is bound to a node. Replace the pod name in the command below with the name of the my-scheduler pod in your cluster:

kubectl logs -n kube-system my-scheduler-79bd4cb788-hq2sq

Expected output:

I1012 15:30:21.629611 1 scheduler.go:604] "Successfully bound pod to node" pod="kube-system/k8s-neuron-scheduler-5d9d9d7988-xcpqm" node="ip-192-168-2-25.ec2.internal" evaluatedNodes=1 feasibleNodes=1

Step 3: Configure Pods to Use Custom Scheduler

When creating Pods that need to use the Neuron Scheduler Extension, specify my-scheduler as the scheduler name. Here’s a sample Pod specification:

apiVersion: v1
kind: Pod
metadata:
  name: <POD_NAME>
spec:
  restartPolicy: Never
  schedulerName: my-scheduler
  containers:
    - name: <POD_NAME>
      command: ["<COMMAND>"]
      image: <IMAGE_NAME>
      resources:
        limits:
          cpu: "4"
          memory: 4Gi
          aws.amazon.com/neuroncore: 9
        requests:
          cpu: "1"
          memory: 1Gi

Step 4: Verify Scheduling

After running a Neuron workload Pod, verify that the Neuron Scheduler successfully processed the filter and bind requests:

kubectl logs -n kube-system k8s-neuron-scheduler-5d9d9d7988-xcpqm

Expected output for filter request:

2022/10/12 15:41:16 POD nrt-test-5038 fits in Node:ip-192-168-2-25.ec2.internal

2022/10/12 15:41:16 Filtered nodes: [ip-192-168-2-25.ec2.internal]

2022/10/12 15:41:16 Failed nodes: map[]

2022/10/12 15:41:16 Finished Processing Filter Request...

Expected output for bind request:

2022/10/12 15:41:16 Executing Bind Request!

2022/10/12 15:41:16 Determine if the pod %v is NeuronDevice podnrt-test-5038

2022/10/12 15:41:16 Updating POD Annotation with alloc devices!

2022/10/12 15:41:16 Return aws.amazon.com/neuroncore

2022/10/12 15:41:16 neuronDevUsageMap for resource:aws.amazon.com/neuroncore in node: ip-192-168-2-25.ec2.internal is [false false false false false false false false false false false false false false false false]

2022/10/12 15:41:16 Allocated ids for POD nrt-test-5038 are: 0,1,2,3,4,5,6,7,8

2022/10/12 15:41:16 Try to bind pod nrt-test-5038 in default namespace to node ip-192-168-2-25.ec2.internal with &Binding{ObjectMeta:{nrt-test-5038 8da590b1-30bc-4335-b7e7-fe574f4f5538 0 0001-01-01 00:00:00 +0000 UTC <nil> <nil> map[] map[] [] [] []},Target:ObjectReference{Kind:Node,Namespace:,Name:ip-192-168-2-25.ec2.internal,UID:,APIVersion:,ResourceVersion:,FieldPath:,},}

2022/10/12 15:41:16 Updating the DevUsageMap since the bind is successful!

2022/10/12 15:41:16 Return aws.amazon.com/neuroncore

2022/10/12 15:41:16 neuronDevUsageMap for resource:aws.amazon.com/neuroncore in node: ip-192-168-2-25.ec2.internal is [false false false false false false false false false false false false false false false false]

2022/10/12 15:41:16 neuronDevUsageMap for resource:aws.amazon.com/neurondevice in node: ip-192-168-2-25.ec2.internal is [false false false false]

2022/10/12 15:41:16 Allocated devices list 0,1,2,3,4,5,6,7,8 for resource aws.amazon.com/neuroncore

2022/10/12 15:41:16 Allocated devices list [0] for other resource aws.amazon.com/neurondevice

2022/10/12 15:41:16 Allocated devices list [0] for other resource aws.amazon.com/neurondevice

2022/10/12 15:41:16 Allocated devices list [0] for other resource aws.amazon.com/neurondevice

2022/10/12 15:41:16 Allocated devices list [0] for other resource aws.amazon.com/neurondevice

2022/10/12 15:41:16 Allocated devices list [1] for other resource aws.amazon.com/neurondevice

2022/10/12 15:41:16 Allocated devices list [1] for other resource aws.amazon.com/neurondevice

2022/10/12 15:41:16 Allocated devices list [1] for other resource aws.amazon.com/neurondevice

2022/10/12 15:41:16 Allocated devices list [1] for other resource aws.amazon.com/neurondevice

2022/10/12 15:41:16 Allocated devices list [2] for other resource aws.amazon.com/neurondevice

2022/10/12 15:41:16 Return aws.amazon.com/neuroncore

2022/10/12 15:41:16 Succesfully updated the DevUsageMap [true true true true true true true true true false false false false false false false] and otherDevUsageMap [true true true false] after alloc for node ip-192-168-2-25.ec2.internal

2022/10/12 15:41:16 Finished executing Bind Request...
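The final log lines show two usage maps being updated together: one per Neuron core and one per Neuron device. The Python sketch below reproduces that relationship for the values in this log. It is an interpretation of the output, not the extension's code; the function name `usage_maps` is hypothetical, and the figure of 4 cores per device is inferred from the log (16 cores, 4 devices).

```python
from typing import Iterable, List, Tuple


def usage_maps(core_ids: Iterable[int],
               total_cores: int = 16,
               cores_per_device: int = 4) -> Tuple[List[bool], List[bool]]:
    """Build per-core and per-device usage maps from allocated core IDs.

    A device is marked in use as soon as any of its cores is allocated.
    The default sizes are inferred from the sample log, not from a
    documented API.
    """
    core_map = [False] * total_cores
    device_map = [False] * (total_cores // cores_per_device)
    for core in core_ids:
        core_map[core] = True
        # Cores 0-3 belong to device 0, cores 4-7 to device 1, and so on.
        device_map[core // cores_per_device] = True
    return core_map, device_map


# Core IDs 0 through 8, as allocated to nrt-test-5038 in the log above.
cores, devices = usage_maps(range(9))
print(cores.count(True))  # 9
print(devices)            # [True, True, True, False]
```

Under these assumptions, the 9 allocated cores occupy devices 0, 1, and 2, which matches the `otherDevUsageMap` value `[true true true false]` in the log.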

The alternative approach integrates the Neuron Scheduler Extension directly with the default Kubernetes scheduler. It requires access to modify the default scheduler configuration.

Prerequisites

Ensure that the Neuron Device Plugin is running.

Step 1: Configure kube-scheduler

Enable kube-scheduler to use a ConfigMap for scheduler policy. In your kops cluster.yml, update the spec section with the following:

spec:
  kubeScheduler:
    usePolicyConfigMap: true

Step 2: Launch the Cluster

Create and launch the cluster:

kops create -f cluster.yml

kops create secret --name neuron-test-1.k8s.local sshpublickey admin -i ~/.ssh/id_rsa.pub

kops update cluster --name neuron-test-1.k8s.local --yes

Step 3: Install Neuron Scheduler Extension

Install the Neuron Scheduler Extension and register it with kube-scheduler:

helm upgrade --install neuron-helm-chart oci://public.ecr.aws/neuron/neuron-helm-chart \
    --set "scheduler.enabled=true" \
    --set "scheduler.customScheduler.enabled=false" \
    --set "scheduler.defaultScheduler.enabled=true" \
    --set "npd.enabled=false"

Troubleshooting

Warning

Neuron devices unavailable after scheduler extension restart

If the Neuron scheduler extension is restarted, upgraded, or reinstalled while Neuron pods are being deleted or completing, the per-node device allocation annotations may become stale. This can cause Neuron devices to appear allocated to pods that no longer exist, preventing new pods from being scheduled onto those devices.

Symptoms:

- New pods requesting Neuron resources remain in Pending state.
- Scheduler extension logs indicate insufficient Neuron devices on nodes that should have available capacity.

To resolve this, remove the stale allocation annotations from affected nodes. The scheduler extension will regenerate them automatically on the next pod scheduling event.

kubectl annotate node <node-name> NEURON_DEV_USAGE_MAP- NEURON_CORE_USAGE_MAP-
